ECE 5725 Covid Access Control System

INTRODUCTION

2020 has been a tough year, and we have all been through a lot. The pandemic has changed our lives greatly, and staying safe on the Cornell University campus and around the Ithaca area matters. We therefore set out to develop the Covid-19 Access System, both to protect ourselves and to act responsibly toward others and society. It can be installed at the entrances of school libraries, other school facilities, and the front doors of ECE buildings.

We designed our project to meet the following criteria. When a visitor stands in front of the system, a face mask must be detected before proceeding to the next step. After a mask is detected, the body temperature module is initialized to make sure the visitor does not have a fever. Afterward, the fingerprint module checks the visitor's identity to confirm that the visitor is a Cornellian, is Covid-negative, and has followed Cornell University's code of conduct. Once all of these checks pass, the door is unlocked and the visitor gains access to the school facilities.

OBJECTIVE

The goal of the project is to use the Raspberry Pi to develop a Covid access control embedded system with face mask recognition, body temperature monitoring, fingerprint verification, and low power consumption. The system is based on Cornell's student database information and the epidemic situation.

DESIGN & TESTING

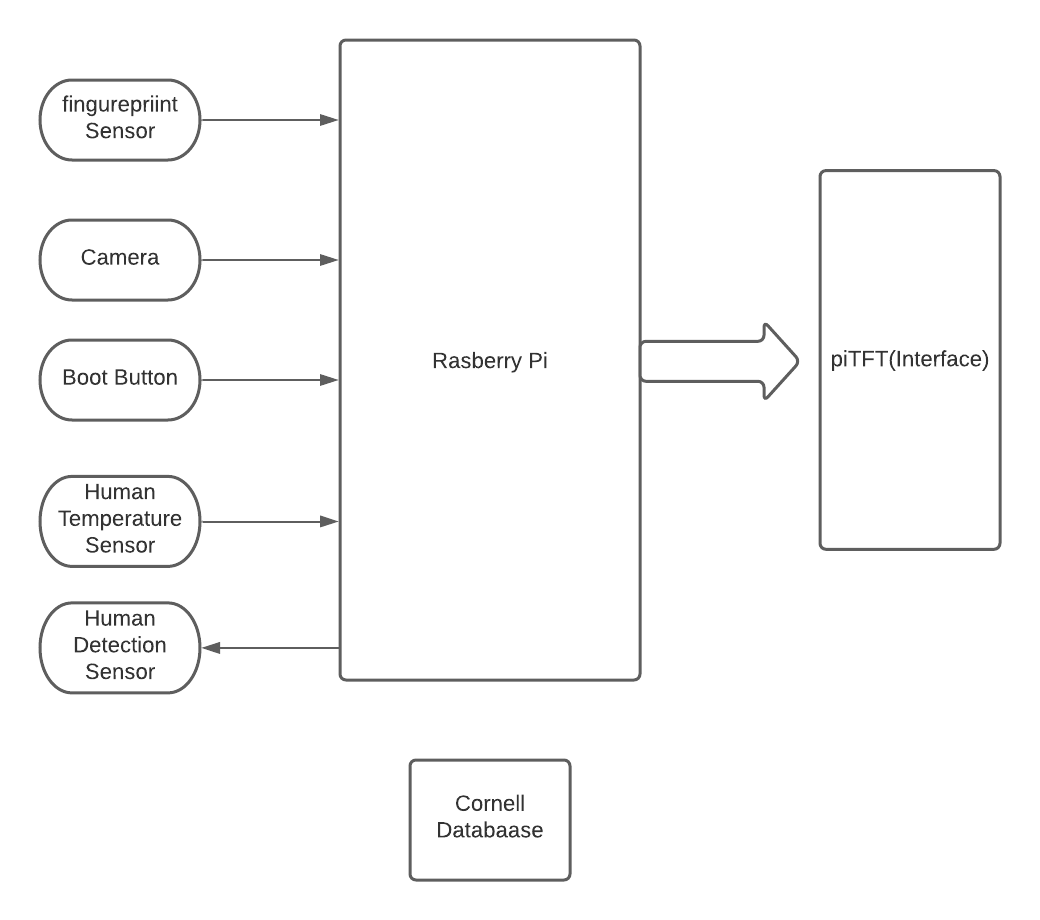

Overall Design

The system is an embedded design built around a Raspberry Pi as its central processing unit. The Pi serves as the platform for processing, storing, and visualizing data and for interacting with users, so it plays a key role in the entire system. As shown in the figure, the Raspberry Pi uses images collected by the camera to recognize a mask with the machine learning model we designed. A body temperature sensor then measures the visitor's temperature to confirm that it is within the normal range. Next, the fingerprint sensor collects the visitor's fingerprint, which is used to look up the corresponding record in our simulated Cornell database. Access is granted or denied based on that record, for example whether the visitor has tested positive or has completed the daily check required by the school.

The most important feature of our system design is its low power consumption mode. If the human presence sensor does not detect anyone for a period of time, the system automatically shuts down. When a new visitor arrives, the system restarts, which keeps power consumption low.

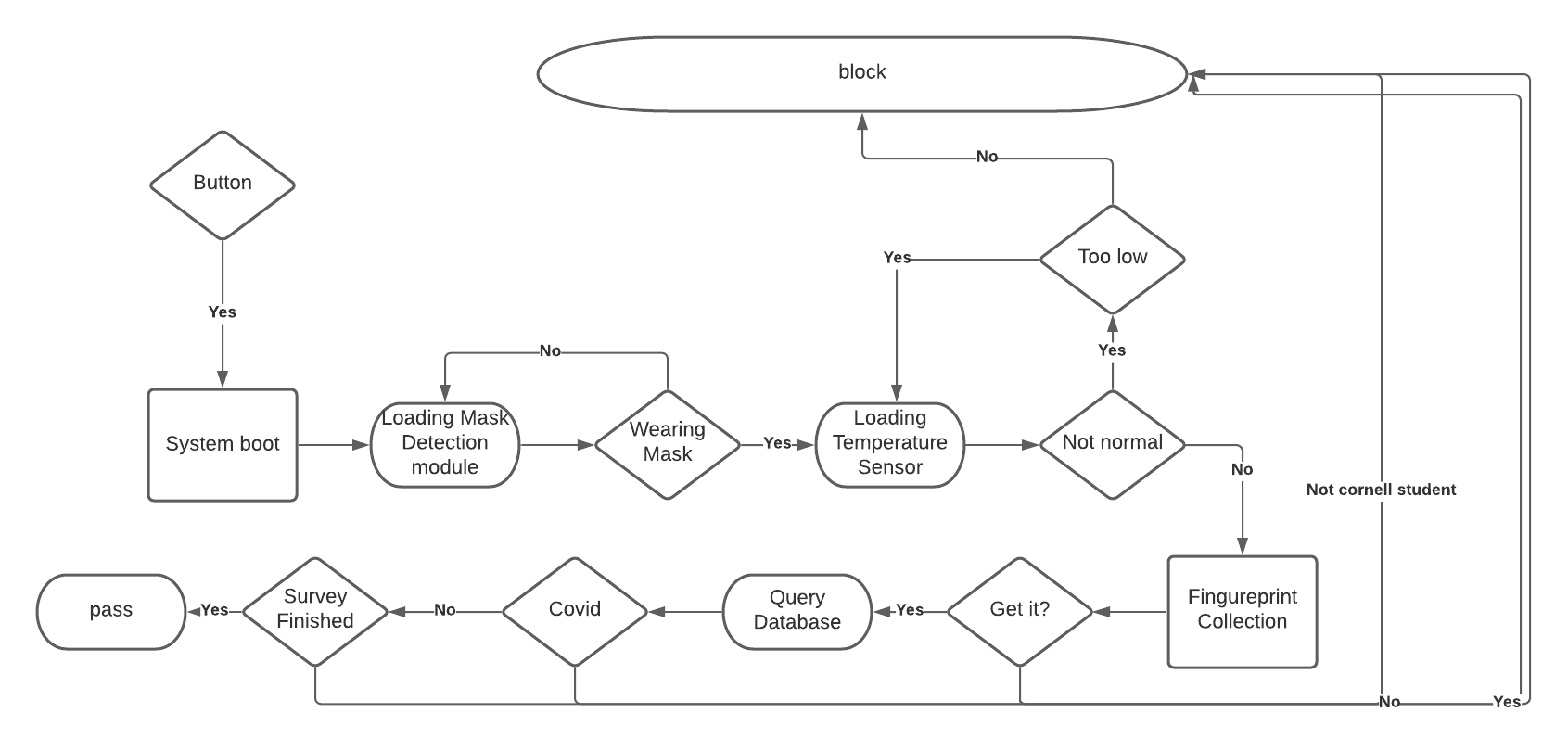

The diagram illustrates the workflow and logic of the entire system. After system initialization, the system enters the mask recognition module and stays there as long as the user is not wearing a mask. Only after a mask is successfully recognized does the system move on to body temperature detection. If the measured temperature is too low, the system reminds the user to bring their forehead closer to the sensor. If the temperature is abnormally high, the system denies access. If the temperature is normal, the system starts collecting fingerprints, and fingerprint verification begins only once the user places a thumb on the sensor. Several outcomes are then possible: the visitor is not a student of the school, the student's health status is abnormal, or the daily check has not been completed. The system responds to each situation accordingly and grants access only when every check passes.
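The workflow described here can be sketched as a small state machine. This is only an illustration: the step names and the reading labels (`"mask"`, `"too_low"`, `"fever"`, `"no_finger"`, `"cleared"`) are hypothetical and are not taken from the project code.

```python
from enum import Enum, auto


class Step(Enum):
    MASK = auto()
    TEMPERATURE = auto()
    FINGERPRINT = auto()
    GRANTED = auto()
    DENIED = auto()


def next_step(step, reading):
    """Return the step that follows `step` given the latest `reading`."""
    if step is Step.MASK:
        # Stay in mask recognition until a mask is seen.
        return Step.TEMPERATURE if reading == "mask" else Step.MASK
    if step is Step.TEMPERATURE:
        if reading == "too_low":      # forehead too far away: measure again
            return Step.TEMPERATURE
        if reading == "fever":
            return Step.DENIED
        return Step.FINGERPRINT
    if step is Step.FINGERPRINT:
        if reading == "no_finger":    # wait for the thumb to be placed
            return Step.FINGERPRINT
        return Step.GRANTED if reading == "cleared" else Step.DENIED
    return step                       # GRANTED and DENIED are terminal


def run(readings):
    """Feed a sequence of readings through the workflow and return the final step."""
    step = Step.MASK
    for reading in readings:
        step = next_step(step, reading)
    return step
```

For example, `run(["no_mask", "mask", "too_low", "normal", "no_finger", "cleared"])` walks through every retry path before ending in `Step.GRANTED`.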

Face Mask Recognition

A Pi Camera was our first choice for this task, and it proved to work well. Because deep learning and neural-network tooling is popular and mature, we had good references to draw on for using ML/DL, and the approach also improves detection accuracy.

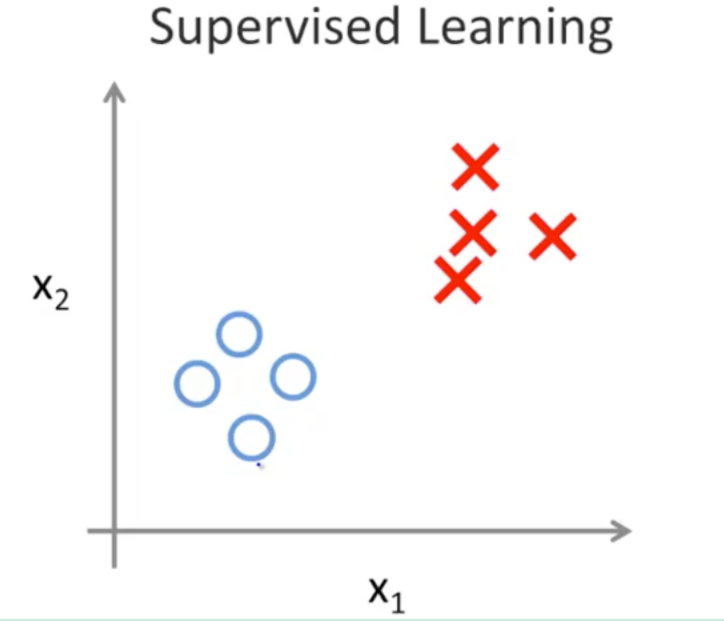

Identifying an input as “yes” or “no” can be treated as a supervised learning problem, since we clearly have two categories: wearing a mask and not wearing a mask. In the figure above, circles can represent “Wearing a Mask” and crosses “Not Wearing a Mask”. Because of this similarity, and because we have only two categories, we are confident that using ML is a good idea and will not cause hope creep.

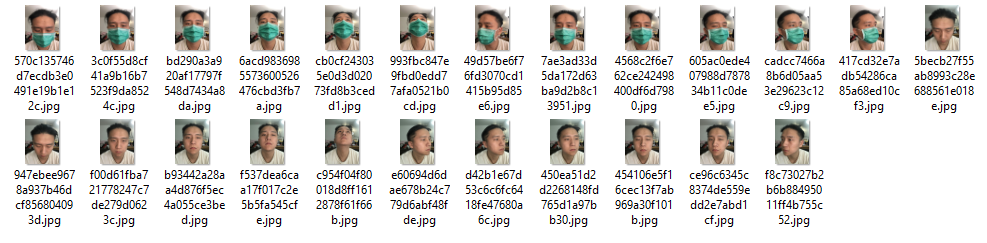

We trained on our problem using a supervised learning module found online. Our training samples are listed below:

To integrate this part into the larger system, we made some modifications. To improve the speed and smoothness of identification, we no longer show the video stream on the PiTFT.

In a real situation, a visitor might need some time to take out a mask. We therefore changed the logic so that the algorithm keeps looping until a face mask is detected. This also works if the first visitor leaves because they have no mask: the next visitor can step up directly without waiting for the system to reboot.
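The "keep looping until a mask is detected" logic can be sketched as below. It assumes each frame yields a `(mask, without_mask)` probability pair, as in the project code; the function name `wait_for_mask` is hypothetical and the frame source is replaced by a plain iterable so the sketch runs without a camera.

```python
def wait_for_mask(predictions):
    """Consume (mask, without_mask) probability pairs until one favours 'mask'.

    Returns the number of frames inspected, or None if the stream ends first.
    """
    for frames_seen, (mask, without_mask) in enumerate(predictions, start=1):
        if mask > without_mask:
            return frames_seen
    return None
```

In the real system the stream never ends, so the function only returns once a masked face has been seen.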

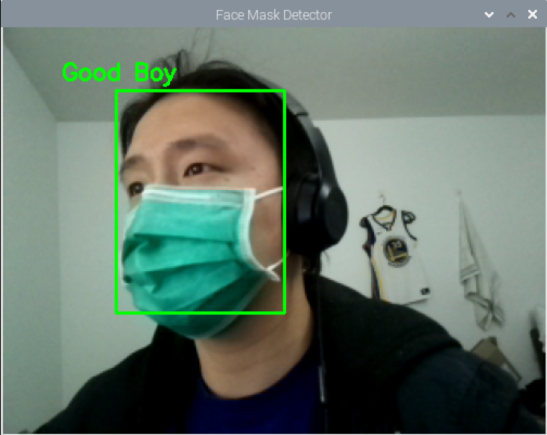

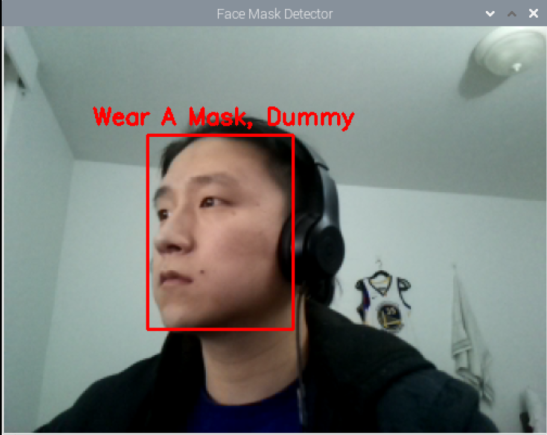

We set up the device by installing OpenCV and TensorFlow. After training the machine learning model, our test results are as follows:

When a face mask is on:

When a face mask is off:

Body Temperature Module

An I2C sensor was our first choice for this task, and it proved to work well. The task is straightforward: once the module is connected correctly, it can measure the temperature of its surroundings.

Because the connecting pins were slightly loose, we soldered them, with help from Professor Skovira, to provide stable connections. One more thing to keep in mind is the address of the module, which is needed to address it in the Python code. The following is what we get from the official manual:

From this we can tell that the slave address of the I2C module is 0x5A.

Since the module we use is the MLX90614, we downloaded its package from the following address: https://pypi.org/project/PyMLX90614/#files

The following test checks that the module is connected correctly and double-checks the address of the sensor:

After checking the slave address, we ran the code to measure the temperature, and the following is what we get:

To integrate this part into the larger system, we run the measurement for 3 s, count how many readings were taken, and average them. We also handle the case where the visitor is too far from the sensor and the measured temperature is too low for a human being. If this happens, the system loops back and measures the visitor's temperature again until valid data is acquired.
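The averaging and retry logic can be sketched as follows. The two thresholds are the ones used in the project code (above 35 counts as a fever, below 25 triggers a re-measurement); the function names and the injectable `read_temp` and `clock` parameters are assumptions added so the sketch runs without the sensor.

```python
import time

FEVER_ABOVE_C = 35.0     # threshold used in the project code
TOO_LOW_BELOW_C = 25.0   # below this the visitor is assumed to be too far away


def average_reading(read_temp, duration_s=3.0, clock=time.time):
    """Average every sample returned by `read_temp()` during `duration_s` seconds."""
    total, count = 0.0, 0
    deadline = clock() + duration_s
    while clock() < deadline:
        total += read_temp()
        count += 1
    return total / count if count else None


def classify(temp_c):
    """Map an averaged temperature to 'retry', 'fever' or 'normal'."""
    if temp_c is None or temp_c < TOO_LOW_BELOW_C:
        return "retry"
    if temp_c > FEVER_ABOVE_C:
        return "fever"
    return "normal"
```

With the real sensor, `read_temp` would be the `get_object_1` method of the `MLX90614` object used in the project code.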

Fingerprint Module

The fingerprint sensor we used is the AS608. The AS608 fingerprint recognition module is a high-performance optical fingerprint module with a built-in chip. The chip includes a DSP arithmetic unit, which lets it capture images quickly and efficiently. The module provides both serial and USB communication interfaces, so it can be used on a variety of platforms and fits our design well. Our system uses serial communication. The structure diagram of the AS608 is as follows.

We found that the most effective way to connect the sensor to the Raspberry Pi is a USB-to-TTL adapter. The adapter exposes the module's TX/RX signals as a serial port, which is easier to handle on the Raspberry Pi. Setting up the connection takes several steps. First, we obtain the port information to identify the device we are using. The baud rate should be set to 57600, which is the maximum and most effective rate the module accepts, and hardware flow control should be disabled.

The original image collected from the sensor is shown below:

We then processed the collected image. The first step is pre-processing, which normalizes the collected fingerprint image. It has two main parts: making the image size consistent and making the gray values consistent. To adjust the gray values, we use the mean and variance of the entire image to bring them into a fixed range. Figure 1.6 is the image after normalization.
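One common way to normalize gray values with the image mean and variance is a linear shift and scale to a chosen target mean and spread. The sketch below shows that idea in plain Python. The target values (128 and 50) and the function name are assumptions for illustration, and it covers only the gray-value part of pre-processing, not the resizing.

```python
from math import sqrt


def normalize(image, target_mean=128.0, target_std=50.0):
    """Shift and scale gray values so the image has the target mean and spread."""
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    variance = sum((p - mean) ** 2 for p in pixels) / len(pixels)
    if variance == 0:                       # flat image: nothing to stretch
        return [[target_mean for _ in row] for row in image]
    scale = target_std / sqrt(variance)
    return [
        # clamp to the valid 8-bit gray range after rescaling
        [min(255.0, max(0.0, target_mean + (p - mean) * scale)) for p in row]
        for row in image
    ]
```

Images captured under different pressure or lighting then end up with comparable brightness and contrast before feature extraction.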

The second step of the algorithm is to extract feature points. The points we select are ridge endpoints and intersections. We traverse each pixel of the thinned image and, for each one, sum the absolute differences between successive pixels in its eight-neighborhood. If the value is 2, the pixel is an endpoint. Figure 1.7 is the image after processing.
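The endpoint rule reads like the standard crossing-number test on a thinned binary image, so the sketch below assumes that interpretation. The value 2 for endpoints comes from the text; treating a sum of 6 as an intersection is the usual companion rule and is an assumption here, as are the function names.

```python
# Offsets of the eight neighbours, walked once around the centre pixel.
RING = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def transitions(skeleton, r, c):
    """Sum of |P_i - P_(i+1)| over the closed ring of eight neighbours."""
    ring = [skeleton[r + dr][c + dc] for dr, dc in RING]
    return sum(abs(ring[i] - ring[(i + 1) % 8]) for i in range(8))


def minutiae(skeleton):
    """Return (endpoints, intersections) as lists of (row, col) positions.

    `skeleton` is a list of rows of 0/1 values; border pixels are skipped.
    """
    endpoints, intersections = [], []
    for r in range(1, len(skeleton) - 1):
        for c in range(1, len(skeleton[0]) - 1):
            if skeleton[r][c] != 1:
                continue
            t = transitions(skeleton, r, c)
            if t == 2:
                endpoints.append((r, c))
            elif t == 6:
                intersections.append((r, c))
    return endpoints, intersections
```

A pixel in the middle of a ridge gives a sum of 4 and is ignored, which is why only endpoints and intersections survive as feature points.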

The fingerprint acquisition module has its own processor and registers, and we store the processed data in those registers. Through serial communication instructions we can perform fingerprint acquisition, fingerprint verification, and queries, which finally return a fingerprint ID. This ID is a field in our database and is the key we use to query it.

Our database and the corresponding fields are shown below.
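The lookup by fingerprint ID can be sketched with an in-memory SQLite database standing in for the project's MySQL server. The column names `covid`, `servey`, and `fingure` follow the project code, where a true `covid` value means a negative test and a true `servey` value means the daily check is missing. The column order, the sample rows, and the function names are hypothetical.

```python
import sqlite3


def open_demo_db():
    """Create an in-memory stand-in for the simulated Cornell database."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE student (id INTEGER PRIMARY KEY, name TEXT, date TEXT,"
        " covid INTEGER, servey INTEGER, fingure TEXT)"
    )
    conn.executemany(
        "INSERT INTO student (name, date, covid, servey, fingure)"
        " VALUES (?, ?, ?, ?, ?)",
        [
            ("Alice", "2020-12-01", 1, 0, "0001"),   # made-up demo rows
            ("Bob", "2020-12-01", 0, 0, "0002"),
            ("Carol", "2020-12-01", 1, 1, "0003"),
        ],
    )
    return conn


def decide(conn, fingerprint_id):
    """Return the access decision for the record matching `fingerprint_id`."""
    row = conn.execute(
        "SELECT name, covid, servey FROM student WHERE fingure = ?",
        (fingerprint_id,),
    ).fetchone()
    if row is None:
        return "not a Cornellian"
    _name, covid_negative, daily_check_missing = row
    if not covid_negative:
        return "covid positive"
    if daily_check_missing:
        return "daily check incomplete"
    return "welcome"
```

Passing the ID as a query parameter, rather than formatting it into the SQL string, keeps the lookup safe even if the ID ever comes from an untrusted source.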

Low Power Module

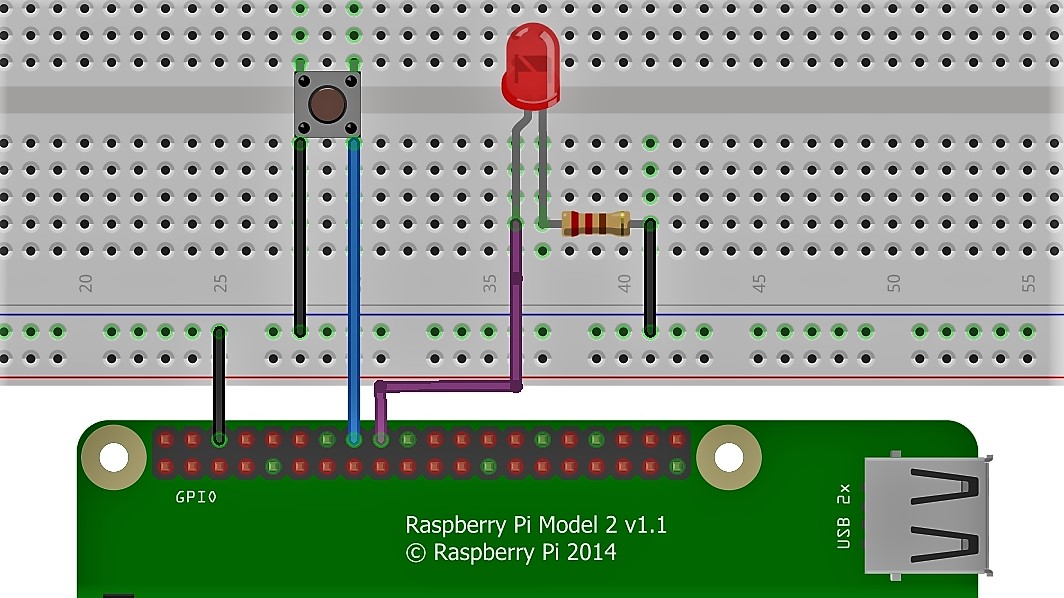

Through investigation and experimentation, we found that the Raspberry Pi 4 has a new feature: when pin 3 is connected to ground, the system restarts. Based on this feature, we designed a power button that restarts the system when pressed. When no one is in front of the system, it stays in low power consumption mode. When a new visitor arrives, pressing the start button starts the system, and the entire project runs automatically after boot, which preserves a smooth user experience. In our tests, the button start worked well together with the system's low power consumption mode. The connection between the button and the Raspberry Pi is shown in the figure.

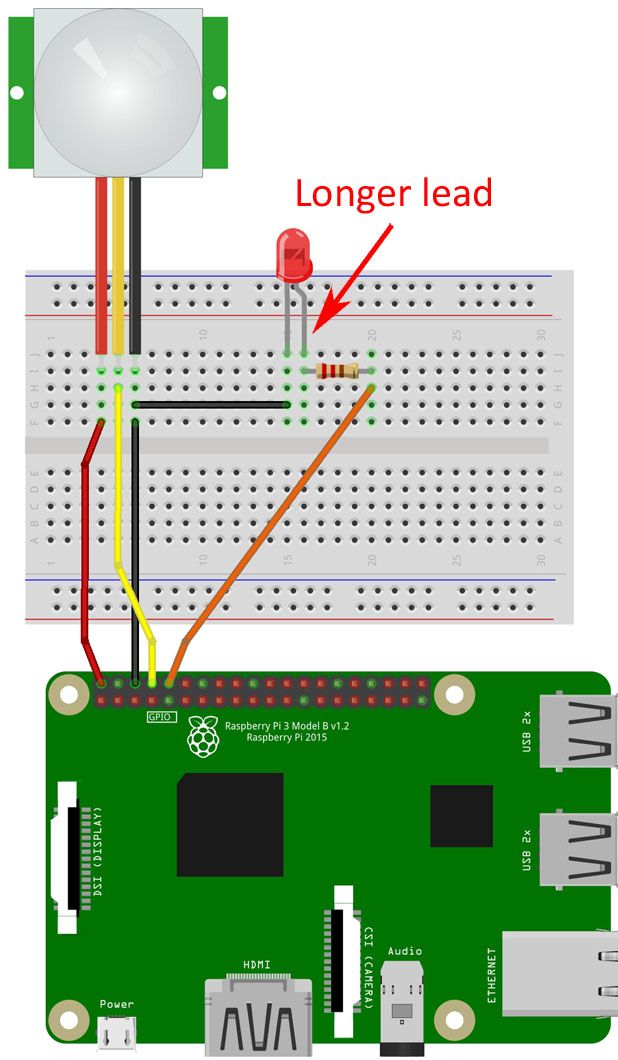

The system needs to operate at the entrance of a building facility 24 hours a day, but it is not in use all the time. Running without interruption for long periods shortens the service life of the system and wastes energy. So, in addition to the energy-saving design, we use an infrared human sensor to monitor whether the equipment is in use. When the system receives no output from the sensor for a certain period of time, it assumes that no one is in front of the device and shuts down, thereby reducing energy consumption and improving the user experience. The connection method of the sensor is shown in the figure below.
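The idle timeout can be sketched as a counter that resets whenever the sensor reports a person. The 20-sample limit and the once-per-second sampling match the project code; the function name is hypothetical and the sensor is replaced by a list of booleans so the sketch runs without hardware.

```python
def seconds_until_shutdown(motion_samples, idle_limit=20):
    """Return the 1-based index of the sample that triggers shutdown, or None.

    `motion_samples` holds one boolean per second: True when the sensor
    reports a person. Any True resets the idle counter.
    """
    idle = 0
    for second, motion in enumerate(motion_samples, start=1):
        idle = 0 if motion else idle + 1
        if idle == idle_limit:
            return second
    return None
```

A single detection at second 20 therefore postpones shutdown to second 40, since the counter starts again from zero.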

Interface Design

Since we already had a PiTFT at hand, we decided to use it as the user interface, showing instructions and progress to the visitor. The layout uses a black background with the Cornell logo at the top and text just below it.

The following shows our welcome page, captured as a screenshot from VNC Viewer while running the program. A full version can be seen in our videos.

RESULT

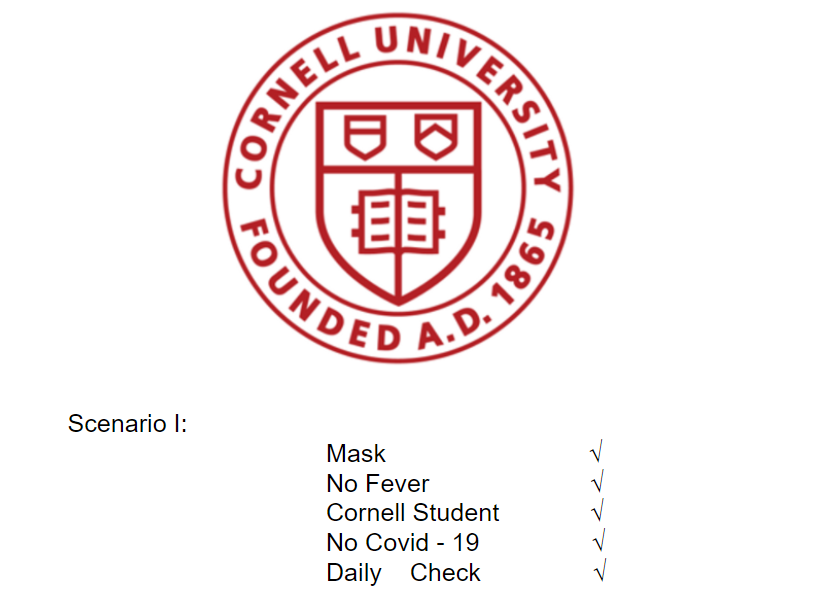

- Scenario 1:

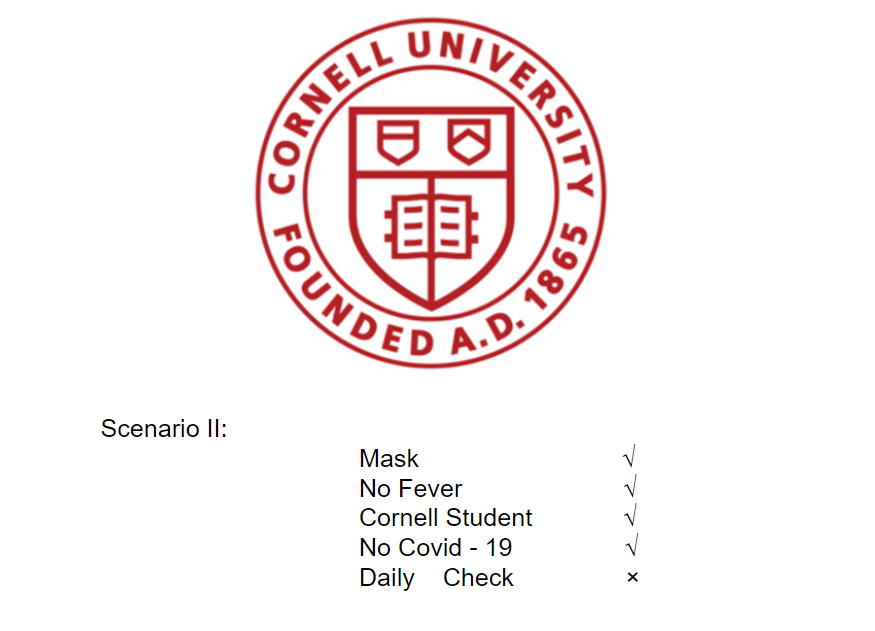

- Scenario 2:

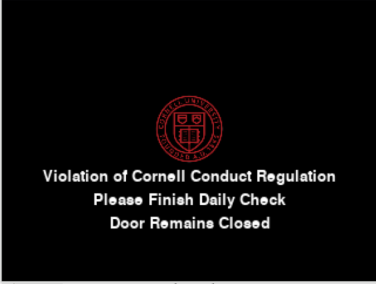

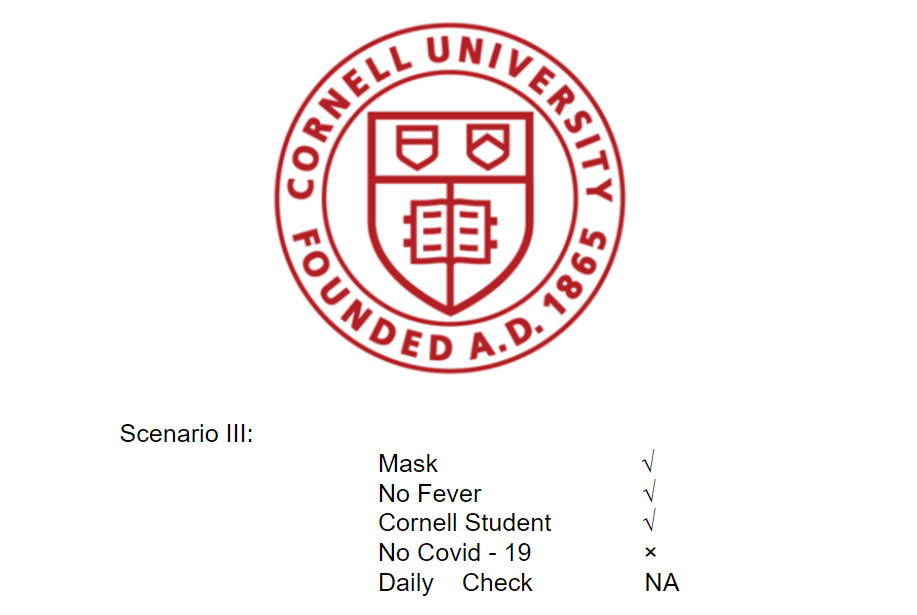

- Scenario 3:

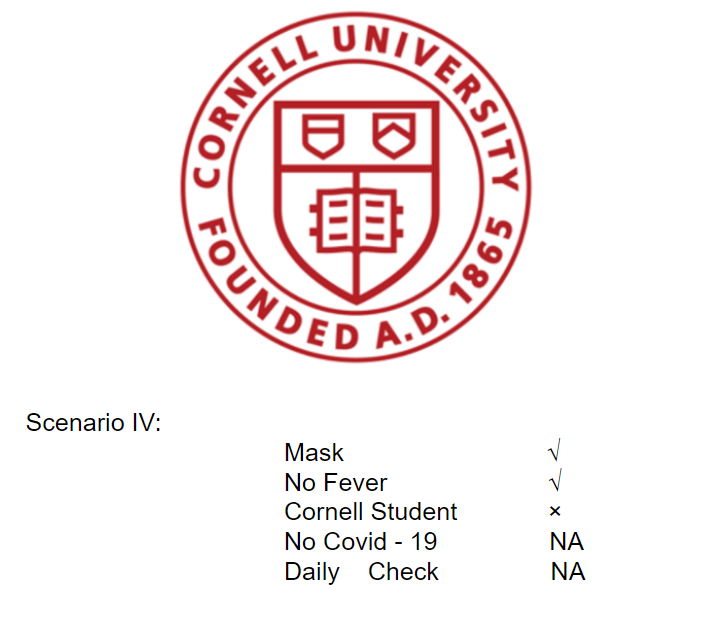

- Scenario 4:

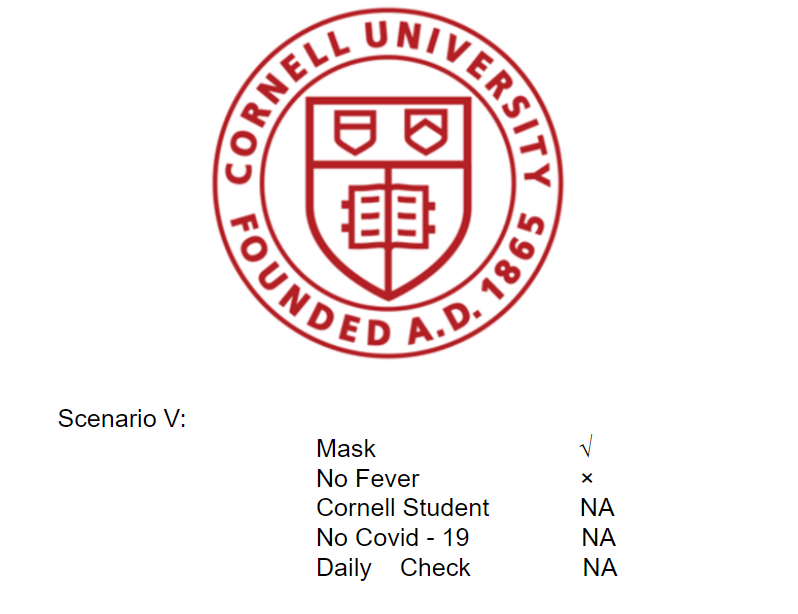

- Scenario 5:

Scenario 1: The visitor is wearing a mask and has a normal body temperature. The visitor is from Cornell, has tested negative for Covid, and has completed the daily check required by the university.

Scenario 2: The visitor is wearing a mask and has a normal body temperature. The visitor is from Cornell and has tested negative for Covid, but has not completed the daily check required by the university.

Scenario 3: The visitor is wearing a mask and has a normal body temperature. The visitor is from Cornell but has tested positive for Covid.

Scenario 4: The visitor is wearing a mask and has a normal body temperature. However, the visitor is not from Cornell University.

Scenario 5: The visitor is wearing a mask but has a fever.

The results shown for each scenario are screenshots taken from VNC Viewer while the program was running.

Parts

| # | Item | Model | Quantity | Price |
|---|---|---|---|---|
| 1 | PiTFT | None | 1 | Borrowed |
| 2 | Raspberry Pi | Version 4 | 1 | Borrowed |
| 3 | Infrared sensor | HC-SR501 | 1 | 6.99 |
| 4 | Raspberry Pi Camera | None | 1 | 22.59 |
| 5 | Human temperature sensor | MLX90614 | 1 | 10.88 |
| 6 | Fingerprint sensor | AS608 | 1 | 11.88 |

Conclusion & Future Work

- We were able to design a complete, working project that checks and verifies visitors to a school facility. The Covid-19 Access System is able to detect a face mask on the visitor, measure the visitor's temperature, verify the visitor's identity, and check whether the visitor has followed Cornell University's code of conduct. Future work includes a better-designed enclosure, since we were only able to build a rough prototype, and a more advanced database that can store more information, since the system can currently store at most 300 records.

References

- OpenCV: https://opencv.org/

- imutils 0.5.3: https://pypi.org/project/imutils/

- TensorFlow: https://www.tensorflow.org/

- MLX90614 data sheet: https://www.sparkfun.com/datasheets/Sensors/Temperature/MLX90614_rev001.pdf

- PyMLX90614 Library: https://pypi.org/project/PyMLX90614/#files

- RPi 4 Bootloader config: https://www.raspberrypi.org/documentation/hardware/raspberrypi/bcm2711_bootloader_config.md/

Code

    # import the necessary packages
    from tensorflow.keras.applications.mobilenet_v2 import preprocess_input
    from tensorflow.keras.preprocessing.image import img_to_array
    from tensorflow.keras.models import load_model
    from imutils.video import VideoStream
    import pygame  # pygame graphics library
    import RPi.GPIO as GPIO
    import numpy as np
    import argparse
    import imutils
    import time
    import cv2
    import os  # for OS calls
    import sys
    from smbus2 import SMBus
    from mlx90614 import MLX90614
    import serial
    import MySQLdb
    import threading

    os.putenv('SDL_FBDEV', '/dev/fb1')
    os.putenv('SDL_VIDEODRIVER', 'fbcon')  # display on the PiTFT

    WHITE = 255, 255, 255
    black = 0, 0, 0
    screen = None  # PiTFT surface, created in recog()
    ball = None    # Cornell logo, loaded in recog()

    # AS608 command packets sent over the serial port
    GET_IMAGE = bytes.fromhex('EF 01 FF FF FF FF 01 00 03 01 00 05')
    IMAGE_TO_BUFFER = bytes.fromhex('EF 01 FF FF FF FF 01 00 04 02 01 00 08')
    SEARCH = bytes.fromhex('EF 01 FF FF FF FF 01 00 08 04 01 00 00 00 64 00 72')


    def show(lines, delay=0, size=20):
        # draw the logo and the given text lines on the PiTFT, then wait `delay` seconds
        my_font = pygame.font.Font(None, size)
        screen.fill(black)
        for i, my_text in enumerate(lines):
            text_surface = my_font.render(my_text, True, WHITE)
            rect = text_surface.get_rect(center=(160, 150 + 20 * i))
            screen.blit(text_surface, rect)
        ballrect = ball.get_rect(center=(160, 110))
        screen.blit(ball, ballrect)
        pygame.display.flip()
        time.sleep(delay)


    def check():
        # measure the body temperature; returns "fever", "retry" or "normal"
        print("Initializing Body Temperature module")
        show(["Initializing Body Temperature module"], delay=1)
        bus = SMBus(1)
        sensor = MLX90614(bus, address=0x5A)
        print("Please bring your forehead near Sensor 1")
        show(["Please bring your forehead near Sensor 1", "This might take about 3s"], delay=3)
        print("Measurement start in 1s")
        show(["Measuring"], delay=1)
        # average every reading taken during a 3 s window
        temp_sum = 0
        total = 0
        t_end_2 = time.time() + 3
        while time.time() < t_end_2:
            total = total + 1
            temp_sum = temp_sum + sensor.get_object_1()
        body_temp = temp_sum / total
        print('Your temperature is: ', body_temp)
        if body_temp > 35:
            print("You are having a fever")
            show(["You are having a fever", "Door Remains Closed"], delay=3)
            return "fever"
        elif body_temp < 25:
            # the visitor is too far from the sensor
            print("Remeasuring")
            show(["Please bring your forehead closer"], delay=2)
            return "retry"
        else:
            print("Normal")
            show(["Normal"], delay=2)
            return "normal"


    def detect_and_predict_mask(frame, faceNet, maskNet, min_confidence):
        # grab the dimensions of the frame and then construct a blob from it
        (h, w) = frame.shape[:2]
        blob = cv2.dnn.blobFromImage(frame, 1.0, (300, 300), (104.0, 177.0, 123.0))
        # pass the blob through the network and obtain the face detections
        faceNet.setInput(blob)
        detections = faceNet.forward()
        # initialize our list of faces, their corresponding locations,
        # and the list of predictions from our face mask network
        faces = []
        locs = []
        preds = []
        # loop over the detections
        for i in range(0, detections.shape[2]):
            # extract the confidence (i.e., probability) associated with the detection
            confidence = detections[0, 0, i, 2]
            # filter out weak detections
            if confidence > min_confidence:
                # compute the (x, y)-coordinates of the bounding box for the object
                box = detections[0, 0, i, 3:7] * np.array([w, h, w, h])
                (startX, startY, endX, endY) = box.astype("int")
                # ensure the bounding boxes fall within the dimensions of the frame
                (startX, startY) = (max(0, startX), max(0, startY))
                (endX, endY) = (min(w - 1, endX), min(h - 1, endY))
                # extract the face ROI, convert it from BGR to RGB channel
                # ordering, resize it to 224x224, and preprocess it
                face = frame[startY:endY, startX:endX]
                face = cv2.cvtColor(face, cv2.COLOR_BGR2RGB)
                face = cv2.resize(face, (224, 224))
                face = img_to_array(face)
                face = preprocess_input(face)
                faces.append(face)
                locs.append((startX, startY, endX, endY))
        # only make predictions if at least one face was detected
        if len(faces) > 0:
            # for faster inference, make batch predictions on all faces at once
            faces = np.array(faces, dtype="float32")
            preds = maskNet.predict(faces, batch_size=32)
        # return a 2-tuple of the face locations and their corresponding predictions
        return (locs, preds)


    def send(ser, command):
        # write one command packet to the fingerprint module and return its reply
        ser.write(command)
        time.sleep(1)
        return ser.read_all()


    def lookup_student(res):
        # query the database for fingerprint ID `res` and show the access decision
        conn = MySQLdb.connect(host='192.168.0.16', user='root', passwd='password', db='test13')
        cursor = conn.cursor()
        cursor.execute("SELECT * FROM student WHERE fingure = %s", (res,))
        data = cursor.fetchone()
        print(data)
        cursor.close()
        conn.close()
        print("we find you")
        name, date, covid, servey = data[1], data[2], data[3], data[4]
        print(name)
        print(date)
        show([str(name), str(date)], delay=4)
        if covid:
            show(["Covid Negative"], delay=2)
            if servey:
                print("not ok")
                show(["Violation of Cornell Conduct Regulation",
                      "Please Finish Daily Check", "Door Remains Closed"], delay=4)
            else:
                print("ok")
                show(["Welcome In", "Door Opens"], delay=2)
        else:
            print("sick")
            show(["Covid detected", "Sorry, we can't let you in"], delay=1)


    def recog(n):
        global screen, ball
        print(n)
        # initial welcome page
        pygame.init()
        screen = pygame.display.set_mode((320, 240))
        ball = pygame.image.load("Cornell_1.png")
        show(['Covid-Access System'], delay=2, size=30)

        # construct the argument parser and parse the arguments
        ap = argparse.ArgumentParser()
        ap.add_argument("-f", "--face", type=str, default="face_detector",
                        help="path to face detector model directory")
        ap.add_argument("-m", "--model", type=str, default="mask_detector.model",
                        help="path to trained face mask detector model")
        ap.add_argument("-c", "--confidence", type=float, default=0.5,
                        help="minimum probability to filter weak detections")
        args = vars(ap.parse_args())

        # load our serialized face detector model from disk
        print("[INFO] loading face detector model...")
        show(["[INFO] loading face detector model..."])
        prototxtPath = os.path.sep.join([args["face"], "deploy.prototxt"])
        weightsPath = os.path.sep.join([args["face"], "res10_300x300_ssd_iter_140000.caffemodel"])
        faceNet = cv2.dnn.readNet(prototxtPath, weightsPath)

        # load the face mask detector model from disk
        print("[INFO] loading face mask detector model...")
        show(["[INFO] loading face mask detector model..."])
        maskNet = load_model(args["model"])

        # initialize the video stream and allow the camera sensor to warm up
        print("Starting video stream...")
        show(["Starting video stream"])
        vs = VideoStream(usePiCamera=True).start()
        time.sleep(2.0)

        # loop over the frames from the video stream until a mask is detected
        show(["Start to Identify Mask"])
        hasMask = False
        while not hasMask:
            # grab the frame from the threaded video stream and resize it
            # to have a maximum width of 500 pixels
            frame = vs.read()
            frame = imutils.resize(frame, width=500)
            # detect faces in the frame and determine if they are wearing a mask
            (locs, preds) = detect_and_predict_mask(frame, faceNet, maskNet, args["confidence"])
            for (box, pred) in zip(locs, preds):
                (mask, withoutMask) = pred
                if mask > withoutMask:
                    hasMask = True
                    print("Good boy")
                    show(["Good boy"])
                else:
                    print("Wear a mask, dummy ", bool(hasMask))
                    show(["Wear a mask, dummy"])

        # do a bit of cleanup
        cv2.destroyAllWindows()
        vs.stop()
        print("The visitor is wearing a mask")
        show(["The visitor is wearing a mask"], delay=2)

        # repeat the temperature check until a valid reading is obtained
        status = check()
        while status == "retry":
            print("returning")
            status = check()
        if status == "fever":
            print("Stops")
            close()

        # fingerprint and database check
        print("next")
        ser = serial.Serial('/dev/ttyUSB0', 57600, timeout=0.5)
        if ser.is_open:
            # start collecting the fingerprint
            print("Connected with fingure")
            show(["Start to Check Student Info"], delay=1)
        else:
            print("fail Connected")
        while True:
            data = send(ser, GET_IMAGE)
            if data == b'':
                continue
            # hex characters 18-19 (byte 9) of a reply hold the confirmation code
            data_con = data.hex()[18:20]
            if data_con == '02':
                # no finger on the sensor yet
                print("Please put your Fingure")
                show(["Please Place your Thumb"], delay=1)
            elif data_con == '00':
                print("Loading")
                show(["Checking Fingerprint Info"], delay=1)
                buff_con = send(ser, IMAGE_TO_BUFFER).hex()[18:20]
                if buff_con == '00':
                    show(["Finished Collecting"], delay=1)
                    store = send(ser, SEARCH).hex()
                    serch_con = store[18:20]
                    res = store[20:24]  # fingerprint ID returned by the search
                    print(res)
                    if serch_con == '09':
                        # no matching fingerprint: not a student
                        print("Sorry you are not cornell student")
                        show(["Sorry, you are not a Cornellian", "Door Remains Closed"], delay=1)
                    elif serch_con == '00':
                        lookup_student(res)
                    ser.close()
                    close()


    def count(n):
        # shut the Pi down after 20 consecutive seconds without motion on GPIO 26
        print(n)
        idle = 0
        while True:
            if GPIO.input(26):
                idle = 0
                print("0")
            else:
                idle = idle + 1
                print(idle)
                if idle == 20:
                    os.system("shutdown -t 5 now")
                    close()
            time.sleep(1)


    def close():
        # GPIO stays configured because the count() thread keeps reading pin 26
        pygame.quit()
        sys.exit()


    if __name__ == "__main__":
        GPIO.setmode(GPIO.BCM)
        GPIO.setwarnings(False)
        GPIO.setup(26, GPIO.IN)
        t1 = threading.Thread(target=count, args=("t1",))
        t2 = threading.Thread(target=recog, args=("t2",))
        t1.start()
        t2.start()